electronics-journal.com

10

'26


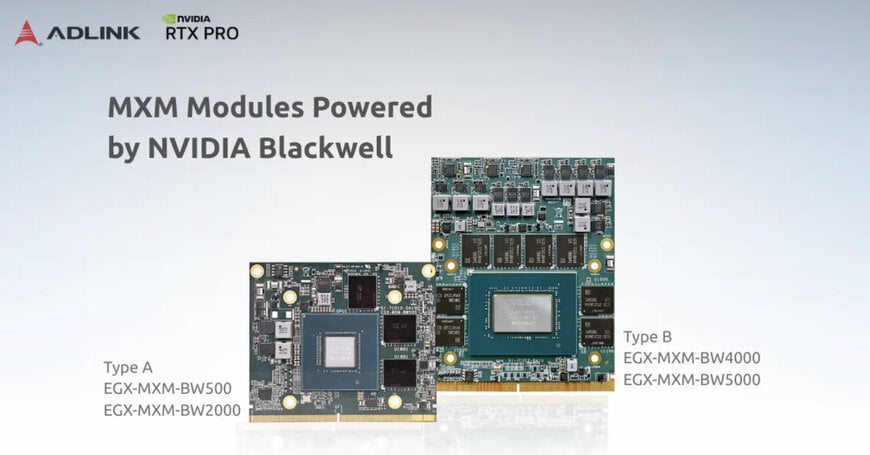

ADLINK Launches High-Performance GPU Modules for Edge AI

Embedded GPU modules based on Blackwell architecture deliver scalable AI acceleration, high-bandwidth memory, and flexible power configurations for industrial edge computing and robotics applications.

www.adlinktech.com

GPU acceleration for industrial edge AI systems

In sectors such as industrial automation, robotics, autonomous mobility, medical imaging and smart infrastructure, the growing use of artificial intelligence requires powerful computing resources that can operate locally at the edge. Systems deployed outside centralized data centers must process large volumes of sensor and image data while maintaining strict constraints on power consumption, thermal design and physical size.

To address these requirements, ADLINK Technology Inc. has introduced a new family of embedded GPU and AI accelerator modules designed for edge computing environments. The modules are built on the NVIDIA Blackwell GPU architecture and are offered in MXM 3.1 Type A and Type B form factors, enabling integration into compact embedded systems used in industrial and mobility applications.

The modules are intended to support AI workloads directly at the edge, including computer vision, deep learning inference, real-time analytics and large language model (LLM) processing.

Scalable performance for diverse deployment environments

Edge AI deployments vary widely in their power, thermal and performance requirements depending on the application. Industrial robots, autonomous vehicles and smart cameras often require high computing performance while operating within limited system power budgets.

The new GPU modules support configurable power ranges from 45 W to 150 W, allowing system designers to adapt performance profiles according to application constraints. This flexibility makes the modules suitable for both compact embedded systems and higher-performance industrial computing platforms.

For example, one module configuration runs at up to 100 W of GPU power within a compact MXM Type A form factor measuring 82 × 70 mm, enabling high compute density in space-constrained embedded devices.

GPU partitioning for parallel AI workloads

The higher-performance models incorporate Multi-Instance GPU (MIG) technology, which enables developers to divide a single GPU into multiple independent instances. Each instance can run separate workloads with isolated resources, improving GPU utilization and enabling parallel processing of multiple AI tasks.

This capability is particularly useful in edge AI environments where different applications—such as video analytics, sensor fusion and predictive maintenance algorithms—must run simultaneously on the same hardware platform.
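
To make the partitioning idea concrete, here is a minimal planning sketch in Python. It is not ADLINK's or NVIDIA's tooling: the function `plan_slices`, the four equal slices and the per-workload memory figures are all assumptions for illustration, and actual MIG instances are created from fixed profiles with NVIDIA's own utilities.

```python
import math


def plan_slices(total_memory_gb, slice_count, workloads):
    """Map each workload to a whole number of equal GPU slices.

    `workloads` maps a name to an assumed memory need in GB.
    Returns (plan, free_slices); raises ValueError if the GPU is too small.
    """
    slice_gb = total_memory_gb / slice_count
    plan = {}
    used = 0
    for name, need_gb in workloads.items():
        # A workload cannot share a slice, so round up to whole slices.
        slices = math.ceil(need_gb / slice_gb)
        if used + slices > slice_count:
            raise ValueError(f"not enough free slices for {name}")
        plan[name] = slices
        used += slices
    return plan, slice_count - used


# Hypothetical memory needs for the three workloads named in the article.
workloads = {
    "video_analytics": 10.0,
    "sensor_fusion": 5.0,
    "predictive_maintenance": 3.0,
}

# Assumes a 24 GB module split into 4 equal slices of 6 GB each.
plan, free = plan_slices(24.0, 4, workloads)
print(plan, "free slices:", free)
```

With these assumed numbers the video analytics task takes two slices and the other two tasks take one each, which fills the GPU while keeping each task's memory separate.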

Blackwell architecture for AI inference acceleration

The modules use the Blackwell generation's GPU processing technologies to accelerate AI inference and high-performance computing tasks.

Support for FP4 precision raises AI inference throughput and reduces memory bandwidth requirements, since each value occupies 4 bits rather than the 8 or 16 bits of FP8 or FP16. The architecture combines CUDA cores, fourth-generation ray tracing cores and fifth-generation tensor cores to accelerate machine learning pipelines, computer vision workloads and real-time data processing.
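
The effect of lower precision on memory can be estimated with simple arithmetic. The Python sketch below compares weight storage at different bit widths; the 8-billion-parameter model size is an assumption chosen for illustration, and the estimate ignores activations and the per-block scaling factors that practical FP4 formats usually add.

```python
def weight_footprint_gb(parameter_count, bits_per_weight):
    """Approximate weight storage in GB (10^9 bytes).

    Counts only the raw weights; scaling factors and activations are ignored.
    """
    return parameter_count * bits_per_weight / 8 / 1e9


# Hypothetical 8-billion-parameter model, not a figure from the article.
PARAMS = 8_000_000_000

for name, bits in (("FP16", 16), ("FP8", 8), ("FP4", 4)):
    print(f"{name}: {weight_footprint_gb(PARAMS, bits):.1f} GB")
```

Halving the bits per weight halves both the storage needed and the bytes that must be read from memory for each pass over the weights.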

Peak computing performance ranges from 9.2 TFLOPS to 49.8 TFLOPS (FP32) depending on the module configuration.
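
For context, peak FP32 figures of this kind are conventionally derived by counting two floating-point operations (one fused multiply-add) per CUDA core per clock cycle. The Python sketch below applies that rule of thumb to the quoted range. The article gives no core counts or clock speeds, so the code only derives the implied product of cores and clock and the ratio between the two ends of the range; the function names are illustrative.

```python
def peak_fp32_tflops(cuda_cores, boost_clock_ghz):
    """Rule-of-thumb peak: 2 FLOPs (one fused multiply-add) per core per cycle."""
    return 2 * cuda_cores * boost_clock_ghz / 1000


def cores_times_clock(tflops):
    """Invert the rule of thumb: implied (CUDA cores x clock in GHz) product."""
    return tflops * 1000 / 2


LOW_TFLOPS, HIGH_TFLOPS = 9.2, 49.8  # range quoted in the article

print(f"implied cores x GHz, low end:  {cores_times_clock(LOW_TFLOPS):.0f}")
print(f"implied cores x GHz, high end: {cores_times_clock(HIGH_TFLOPS):.0f}")
print(f"high/low ratio: {HIGH_TFLOPS / LOW_TFLOPS:.1f}x")
```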

High-bandwidth memory for data-intensive workloads

All modules incorporate next-generation GDDR7 memory, designed to provide high bandwidth and low latency for AI workloads that require rapid data movement between memory and GPU processing cores.

Depending on the configuration, the memory subsystem supports up to 24 GB of GDDR7 memory, memory interfaces up to 256-bit, and memory bandwidth reaching up to 896 GB/s. These specifications allow embedded systems to process high-resolution images, deep learning models and complex simulations efficiently in edge environments.
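
The bandwidth figure follows from the bus width and the per-pin data rate. As a rough check in Python, the sketch below derives the per-pin rate implied by the quoted maximums. It assumes the 256-bit interface, the 896 GB/s bandwidth and the 24 GB capacity all belong to the same configuration, which the article does not state explicitly.

```python
def bandwidth_gb_s(bus_width_bits, pin_rate_gbps):
    """Memory bandwidth in GB/s: pins x bits per second per pin / 8 bits per byte."""
    return bus_width_bits * pin_rate_gbps / 8


def pin_rate_gbps(bandwidth_gb_s_value, bus_width_bits):
    """Per-pin data rate in Gbit/s implied by a bandwidth and a bus width."""
    return bandwidth_gb_s_value * 8 / bus_width_bits


def full_sweep_ms(capacity_gb, bandwidth_gb_s_value):
    """Ideal time in milliseconds to read the entire memory once."""
    return capacity_gb / bandwidth_gb_s_value * 1000


rate = pin_rate_gbps(896, 256)
print(f"implied per-pin rate: {rate:.0f} Gbit/s")
print(f"check: {bandwidth_gb_s(256, rate):.0f} GB/s")
print(f"one full pass over 24 GB: {full_sweep_ms(24, 896):.1f} ms")
```

Under these assumptions each pin carries 28 Gbit/s, and reading all 24 GB once takes about 27 ms at best, which puts a ceiling on how often a model that fills the memory can be swept per second.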

Multi-display support and industrial reliability

The GPU modules support up to four DisplayPort 1.4a outputs, enabling multi-display visualization for applications such as industrial control rooms, transportation systems and autonomous vehicle monitoring interfaces.

Designed for industrial deployments, the modules support wide operating temperature ranges, including standard operation between 0 °C and 55 °C and extended operation between −40 °C and 85 °C for harsh environments.

In addition, the platform is designed with five-year product availability, allowing equipment manufacturers to maintain long lifecycle support for embedded systems deployed in industrial infrastructure.

By combining high-performance GPU computing with compact form factors and configurable power profiles, the new modules are intended to support the development of next-generation edge AI systems across industrial and mobility sectors.


Edited by Industrial Journalist, Natania Lyngdoh.
